Introduction

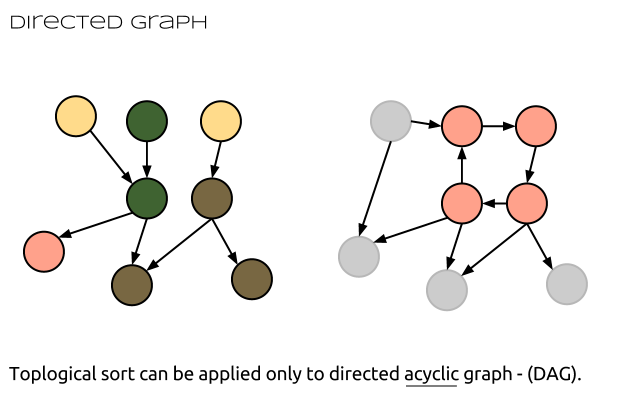

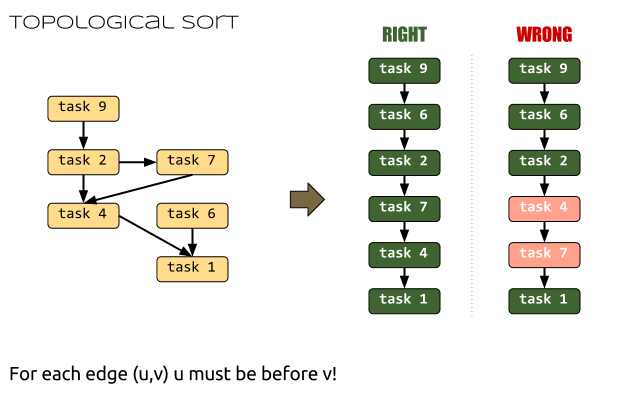

We have seen how to find shortest paths in a graph with non-negative edge weights using Dijkstra’s algorithm. We also know how to find the shortest paths from a given source node to all other nodes, even when some edges are negative, using the Bellman-Ford algorithm. Now we’ll see that there is a faster algorithm, running in linear time, O(V + E), that finds the shortest paths from a given source node to all other reachable vertices in a directed acyclic graph, also known as a DAG.

Because a DAG is acyclic, we don’t have to worry about negative cycles. As we already know, it is pointless to speak about a shortest path when a negative cycle lies on the way to the target: we can loop around the cycle as many times as we like, and each lap makes the path shorter, so no shortest path exists.
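To make the "shorter and shorter" effect concrete, here is a tiny arithmetic sketch. The function name `walk_cost` and the weights are made up for illustration: a walk reaches a cycle at cost 2, each lap around the cycle costs -3, and the rest of the way to the target costs 4.

```python
def walk_cost(prefix_cost, cycle_cost, suffix_cost, laps):
    """Total cost of a walk that goes around the cycle `laps` times."""
    return prefix_cost + laps * cycle_cost + suffix_cost

# Hypothetical weights: 2 to reach the cycle, -3 per lap, 4 to leave it.
costs = [walk_cost(2, -3, 4, laps) for laps in range(4)]
print(costs)  # every extra lap lowers the total, so there is no minimum
```

With a negative cycle cost the totals decrease without bound as the number of laps grows, which is exactly the situation a DAG rules out.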

Thus Dijkstra and Bellman-Ford each come with a restriction. Dijkstra requires non-negative weights, and Bellman-Ford, although it tolerates negative edges, cannot give an answer when a negative cycle is reachable from the source. In a DAG neither restriction is a problem: negative weights are allowed and there are no cycles at all, so this algorithm handles both cases.
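As a preview, the idea can be sketched in Python as follows. This is a minimal sketch and not necessarily the implementation this article goes on to develop. It assumes vertices are numbered `0..num_vertices-1` and edges are given as `(u, v, weight)` tuples. The function name `dag_shortest_paths` and the example graph are made up for illustration. The sketch orders the vertices topologically (here with Kahn's algorithm) and then relaxes each vertex's outgoing edges once, in that order.

```python
from collections import deque
import math

def dag_shortest_paths(num_vertices, edges, source):
    """Shortest distances from `source` in a DAG; unreachable vertices stay at infinity."""
    adjacency = [[] for _ in range(num_vertices)]
    in_degree = [0] * num_vertices
    for u, v, w in edges:
        adjacency[u].append((v, w))
        in_degree[v] += 1

    # Topological order via Kahn's algorithm: repeatedly take a vertex
    # that has no remaining incoming edges.
    queue = deque(v for v in range(num_vertices) if in_degree[v] == 0)
    order = []
    while queue:
        u = queue.popleft()
        order.append(u)
        for v, _ in adjacency[u]:
            in_degree[v] -= 1
            if in_degree[v] == 0:
                queue.append(v)
    if len(order) != num_vertices:
        raise ValueError("graph contains a cycle, so it is not a DAG")

    # Relax every edge exactly once, in topological order.
    dist = [math.inf] * num_vertices
    dist[source] = 0
    for u in order:
        if dist[u] == math.inf:
            continue  # u is not reachable from the source
        for v, w in adjacency[u]:
            if dist[u] + w < dist[v]:
                dist[v] = dist[u] + w
    return dist

# Hypothetical example graph with negative edges; vertex 5 is unreachable from 0.
edges = [(0, 1, 5), (0, 2, 3), (1, 2, -4), (1, 3, 6), (2, 3, 2), (3, 4, -1), (5, 3, 1)]
print(dag_shortest_paths(6, edges, 0))  # [0, 5, 1, 3, 2, inf]
```

Each vertex and each edge is handled a constant number of times, which is where the linear O(V + E) running time comes from. Negative weights need no special treatment because, by the time a vertex is processed, all of its predecessors have already been finalized.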